
11/13/2023 Meta learning algorithms

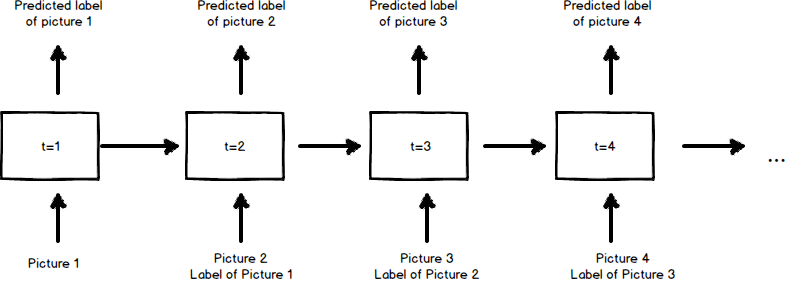

Finally, we propose practical interventions, such as biasing the training distribution, that improve the meta-training and meta-generalization of general purpose learning algorithms. Split the dataset into training and testing sets. We further show that the capabilities of meta-trained algorithms are bottlenecked by the accessible state size determining the next prediction, unlike standard models, which are thought to be bottlenecked by parameter count. The entire algorithm for meta-learning the robot is: collect a dataset of each object with various shapes and textures, and for each object, specify the correct location to place it. We characterize phase transitions between algorithms that generalize, algorithms that memorize, and algorithms that fail to meta-train at all, induced by changes in model size, number of tasks used during meta-training, and meta-optimization hyper-parameters. Meta-learning promises to leverage the experience gained on previous tasks to train models faster, with fewer examples, and possibly with better performance. We propose an algorithm for meta-learning that is model-agnostic, in the sense that it is compatible with any model trained with gradient descent and applicable to a variety of different learning problems, including classification. Chelsea Finn, Pieter Abbeel, Sergey Levine. In this paper we show that Transformers and other black-box models can be meta-trained to act as general purpose learning algorithms, and can generalize to learn on different datasets than those used during meta-training. Meta-learning algorithms with memory, which we expect will perform better, may perform best with different task proposal mechanisms. Scaling unsupervised meta-learning to leverage large-scale datasets and complex tasks holds the promise of acquiring learning procedures for solving real-world problems more efficiently than our current learning. Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks.
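To make the model-agnostic, gradient-based idea above more concrete, here is a minimal sketch of a MAML-style training loop in plain Python. It is an illustration under stated assumptions, not the implementation from the paper: the task family (1-D regression `y = slope * x`), the one-parameter model, and every name and hyper-parameter (`make_task`, `adapt`, `maml_train`, `inner_lr`, `outer_lr`) are hypothetical choices made so the gradients can be written by hand.

```python
import random

def make_task(rng):
    """Hypothetical task family: y = slope * x with a task-specific slope."""
    slope = rng.uniform(0.5, 1.5)
    def sample(k):
        xs = [rng.uniform(-1.0, 1.0) for _ in range(k)]
        return [(x, slope * x) for x in xs]
    return sample

def loss(w, data):
    """Mean squared error of the one-parameter model y_hat = w * x."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def grad(w, data):
    """Derivative of the mean squared error with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in data) / len(data)

def hessian(data):
    """Second derivative of the loss with respect to w (constant in w here)."""
    return sum(2.0 * x * x for x, _ in data) / len(data)

def adapt(w, support, inner_lr):
    """Inner loop: one gradient step on the task's support set."""
    return w - inner_lr * grad(w, support)

def maml_train(steps=500, tasks_per_step=8, k=10,
               inner_lr=0.1, outer_lr=0.05, seed=0):
    """Outer loop: update the initialization w so one inner step adapts well."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(steps):
        meta_grad = 0.0
        for _ in range(tasks_per_step):
            sample = make_task(rng)
            support, query = sample(k), sample(k)
            w_adapted = adapt(w, support, inner_lr)
            # Chain rule through the inner step:
            # d(w_adapted)/dw = 1 - inner_lr * hessian(support)
            meta_grad += grad(w_adapted, query) * (1.0 - inner_lr * hessian(support))
        w -= outer_lr * meta_grad / tasks_per_step
    return w

if __name__ == "__main__":
    w0 = maml_train()
    print("meta-learned initialization:", w0)
```

In this toy setting the second-order term reduces to a scalar factor, which keeps the sketch short; with a real network one would normally rely on an automatic differentiation library to differentiate through the inner step.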
Experiments, both with artificial and real-world databases, show that landmarking selects, with moderate but reasonable level of success, the best performing of a. Google, Meta, and others test their algorithms for bias using standardized skin tone scales. A general purpose learning algorithm is one which takes in training data, and produces test-set predictions across a wide range of problems, without any explicit definition of an inference model, training loss, or optimization algorithm. One particularly ambitious goal of meta-learning is to train general purpose learning algorithms from scratch, using only black box models with minimal inductive bias. Meta-learning, or learning-to-learn, instead aims to learn those aspects, and promises to unlock greater capabilities with less manual effort. Experiments on regression problems are conducted to verify our theoretical results. Abstract: Modern machine learning requires system designers to specify aspects of the learning pipeline, such as losses, architectures, and optimizers. Finally, we obtain a generalization bound for meta-learning with dependent episodes whose dependency relation is characterized by a graph. Supposing the $n$ training episodes and the test episodes are sampled independently from the same environment, previous work has derived a generalization bound of $O(1/\sqrt{n})$ with the curvature condition of loss functions. We study the convergence of a class of gradient-based Model-Agnostic Meta-Learning (MAML) methods and characterize their overall complexity as well as their. However, the adopted meta-learning algorithm (iMAML) showed only marginal performance gain when trained with tasks comprised of instances from various medical categories. As more tasks become available, a new task can be learned better than the last. Model-agnostic meta-learning (MAML) was proposed by Finn et al. The support/query episodic training strategy has been widely applied in modern meta-learning algorithms.
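The support/query episodic strategy mentioned above can be sketched as a small sampler. This is a rough illustration rather than any particular paper's procedure: the N-way K-shot framing, the function name `sample_episode`, and its parameters (`n_way`, `k_shot`, `n_query`) are assumptions introduced here for the example.

```python
import random
from collections import defaultdict

def sample_episode(examples, n_way, k_shot, n_query, rng):
    """Build one episode from a list of (features, label) pairs.

    Returns (support, query); labels are remapped to 0..n_way-1 so each
    episode looks like a fresh small classification task.
    """
    by_label = defaultdict(list)
    for features, label in examples:
        by_label[label].append(features)

    # Only classes with enough examples for disjoint support and query sets.
    eligible = [lab for lab, items in by_label.items()
                if len(items) >= k_shot + n_query]
    if len(eligible) < n_way:
        raise ValueError("not enough classes with sufficient examples")

    classes = rng.sample(eligible, n_way)
    support, query = [], []
    for episode_label, lab in enumerate(classes):
        picked = rng.sample(by_label[lab], k_shot + n_query)
        support += [(f, episode_label) for f in picked[:k_shot]]
        query += [(f, episode_label) for f in picked[k_shot:]]
    return support, query

if __name__ == "__main__":
    # Toy dataset: 5 classes with 10 integer "features" each.
    data = [(c * 100 + i, c) for c in range(5) for i in range(10)]
    support, query = sample_episode(data, n_way=3, k_shot=2, n_query=3,
                                    rng=random.Random(0))
    print(len(support), "support examples,", len(query), "query examples")
```

The learner adapts on the support set and is scored on the query set, so the two are drawn without overlap within each class.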
Meta learning is commonly viewed as learning a general-purpose learning algorithm that can generalize across tasks. Meta-learning, on the other hand, is designed explicitly around constructing tasks and algorithms for generalizable learning. Meta-Learning: Theory, Algorithms and Applications is a great resource to understand the principles of meta-learning and to learn state-of-the-art meta-learning algorithms, giving the student, researcher and industry professional the ability to apply meta-learning for various novel applications.

Jiechao Guan, Yong Liu, Zhiwu Lu
